Examples of Neural Networks

Neural networks consist of interconnected nodes, or artificial neurons, organized into layers that work together to process and learn from input data. The goal of a neural network is to recognize patterns or make predictions based on the data it has been trained on.

A typical neural network has three main types of layers:

Input layer: This is where the raw data is fed into the network. Each node in this layer corresponds to a feature or variable in the input data.

Hidden layers: These are the layers between the input and output layers, where the actual processing and learning occur. Each neuron in these layers computes a weighted sum of its inputs, adds a bias, and passes the result through a nonlinear activation function, which enables the network to learn complex patterns and representations. The number of hidden layers and the number of neurons in each layer can vary depending on the problem being solved.

Output layer: This is the final layer of the network, which produces the output or prediction based on the learned patterns. The number of neurons in this layer depends on the desired output format, such as a single value for a regression problem or one value per class for a classification problem.
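To make the three layer types concrete, here is a minimal sketch of a forward pass in plain Python. The layer sizes, the weight and bias values, the ReLU activation, and the helper names (`dense`, `forward`) are all illustrative assumptions rather than anything prescribed above.

```python
def dense(inputs, weights, biases, activation):
    """One fully connected layer: each neuron takes a weighted sum plus a bias."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(z))
    return outputs

def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def forward(x):
    # Input layer: x holds two features, one per input node.
    # Hidden layer: three neurons, each with two weights and one bias (made-up values).
    hidden = dense(
        x,
        weights=[[0.5, -1.0], [1.0, 1.0], [-0.5, 0.25]],
        biases=[0.0, -1.0, 0.5],
        activation=relu,
    )
    # Output layer: a single neuron, as in a regression problem.
    return dense(hidden, weights=[[1.0, 2.0, -1.0]], biases=[0.1], activation=identity)

prediction = forward([2.0, 1.0])
```

With these hand-picked values, only the second hidden neuron is active, and the single output is about 4.1. In a trained network the weights and biases would be learned rather than written by hand.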

The connections between neurons in the network have associated weights, which represent the strength of each connection. During training, these weights are adjusted to minimize the error between the network's predictions and the actual target values. This is typically achieved with backpropagation, which computes the gradient of the error with respect to each weight, together with an optimization method such as gradient descent, which moves each weight a small step in the direction that reduces the error.
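The weight-update idea can be sketched on a deliberately tiny network: one tanh hidden neuron feeding one linear output neuron, trained on a single example with a squared error. The network shape, the starting weights, the learning rate of 0.1, and the function name `train_step` are assumptions chosen only to keep the chain rule easy to follow.

```python
import math

def train_step(w1, w2, x, target, learning_rate=0.1):
    # Forward pass: hidden activation, then prediction, then squared error.
    h = math.tanh(w1 * x)
    prediction = w2 * h
    error = (prediction - target) ** 2

    # Backward pass: apply the chain rule from the error back to each weight.
    d_prediction = 2.0 * (prediction - target)
    d_w2 = d_prediction * h
    d_h = d_prediction * w2
    d_w1 = d_h * (1.0 - h * h) * x  # derivative of tanh is 1 - tanh^2

    # Gradient descent: move each weight a small step against its gradient.
    return w1 - learning_rate * d_w1, w2 - learning_rate * d_w2, error

w1, w2 = 0.5, 0.5
errors = []
for _ in range(50):
    w1, w2, error = train_step(w1, w2, x=1.0, target=0.8)
    errors.append(error)
```

The recorded `errors` should shrink over the 50 steps. Real training loops apply the same forward, backward, and update cycle to many examples and many more weights, usually through an automatic differentiation library rather than hand-written derivatives.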

Neural networks have achieved state-of-the-art performance in domains such as computer vision, speech recognition, and natural language processing, making them a popular choice for solving complex problems in artificial intelligence and machine learning.

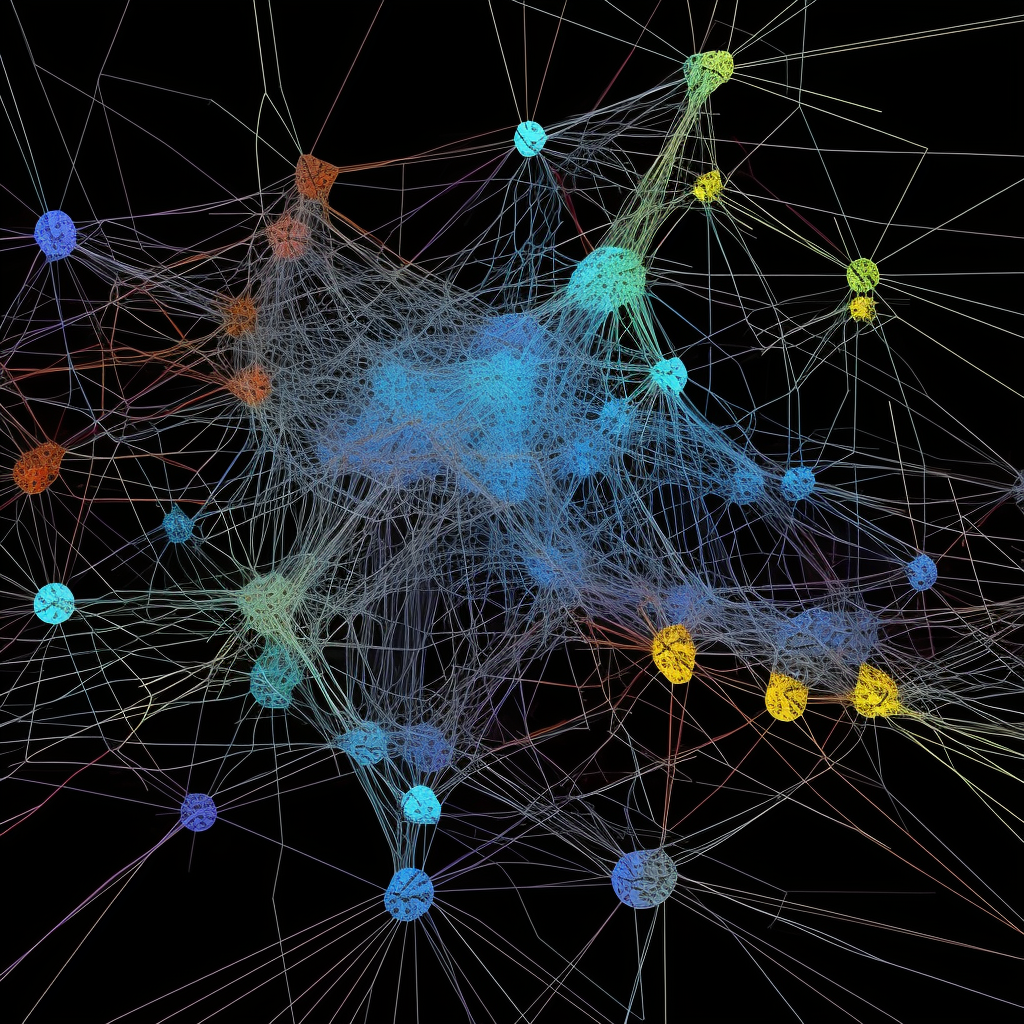

As neural networks have evolved, various architectures and types have been developed to address specific problems or improve performance. Some of the notable types of neural networks include:

Feedforward Neural Networks (FNN): These are the simplest type of neural networks, where the information flows in one direction from the input layer through the hidden layers and finally to the output layer. There are no loops or cycles in the network, which makes them straightforward to implement and train.

Convolutional Neural Networks (CNN): These networks are designed for processing grid-like data, such as images or time series data. CNNs use convolutional layers to scan the input data with filters or kernels, detecting local patterns or features. This enables the network to learn hierarchical representations, with lower layers detecting simple features and higher layers detecting more complex patterns. CNNs are widely used in computer vision tasks, such as image recognition and object detection.
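As a rough illustration of how a filter detects a local pattern, the sketch below slides a two-element kernel over a one-dimensional signal. The function name `conv1d`, the signal, and the kernel values are illustrative assumptions. Like most deep learning libraries, it does not flip the kernel, so strictly speaking it computes a cross-correlation.

```python
def conv1d(signal, kernel):
    """Slide the kernel over the signal (no padding, stride 1), taking a dot product at each position."""
    width = len(kernel)
    return [
        sum(k * s for k, s in zip(kernel, signal[i:i + width]))
        for i in range(len(signal) - width + 1)
    ]

# A step from 0 to 1; the kernel [-1, 1] responds only where neighboring values differ.
signal = [0, 0, 0, 1, 1, 1]
edge_kernel = [-1, 1]
feature_map = conv1d(signal, edge_kernel)  # [0, 0, 1, 0, 0]
```

The same small kernel is reused at every position, which is why a convolutional layer needs far fewer weights than a fully connected layer over the same input. In a CNN the kernel values are learned, and images use two-dimensional kernels in the same way.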

Recurrent Neural Networks (RNN): These networks are designed for processing sequences of data or data with temporal dependencies. RNNs have connections that loop back on themselves, allowing the network to maintain a hidden state that can capture information from previous time steps. This makes them suitable for tasks like natural language processing, speech recognition, and time series analysis. However, RNNs can suffer from issues like vanishing or exploding gradients, which make it difficult to train them on long sequences.
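The looping connection can be sketched with a single-unit RNN whose hidden state is fed back in at each time step. The weights (`w_x`, `w_h`, `b`), the tanh activation, and the function names are assumptions made for illustration.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One time step: the new hidden state mixes the current input with the previous state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=1.0, w_h=0.5, b=0.0):
    h = 0.0  # initial hidden state
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)  # the same weights are reused at every step
        states.append(h)
    return states

# A single pulse followed by zeros: the state still carries a trace of the first input.
states = run_rnn([1.0, 0.0, 0.0])
```

Here the hidden state stays positive after the pulse but shrinks at each step. That fading trace hints at why plain RNNs struggle to carry information, and gradients, across long sequences.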

Long Short-Term Memory Networks (LSTM): LSTMs are a type of RNN that mitigates the vanishing gradient problem by introducing a memory cell and a set of gating mechanisms. These allow the network to learn long-range dependencies more effectively. LSTMs have been successfully applied to a wide range of sequence modeling problems, such as machine translation, text generation, and sentiment analysis.
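A single-unit LSTM step can be sketched as follows, using the commonly described forget, input, and output gates plus a candidate value. The parameter layout (`p` mapping each gate name to a weight on the input, a weight on the previous hidden state, and a bias) and all numeric values are hypothetical, hand-picked so that the cell holds on to what it has stored.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a single-unit LSTM; p maps each gate name to (w_x, w_h, bias)."""
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))        # how much of the old cell state to keep
    i = sigmoid(pre("input"))         # how much of the candidate to write
    o = sigmoid(pre("output"))        # how much of the cell state to expose
    g = math.tanh(pre("candidate"))   # candidate value to write into the cell

    c = f * c_prev + i * g            # additive update of the memory cell
    h = o * math.tanh(c)              # hidden state passed to the next step
    return h, c

params = {
    "forget": (0.0, 0.0, 5.0),      # large bias keeps the forget gate close to 1
    "input": (5.0, 0.0, -2.5),      # opens when x is 1, mostly closed when x is 0
    "output": (0.0, 0.0, 5.0),
    "candidate": (2.0, 0.0, 0.0),
}

h, c = 0.0, 0.0
cells = []
for x in [1.0, 0.0, 0.0, 0.0]:
    h, c = lstm_step(x, h, c, params)
    cells.append(c)
```

With the forget gate close to 1, the cell state written at the first step decays only slightly over the following steps. A trained LSTM learns when to open and close each gate instead of relying on fixed values like these.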

Transformer Networks: These networks have become increasingly popular in natural language processing tasks due to their ability to handle long-range dependencies and parallelize training more effectively than RNNs. Transformers use a self-attention mechanism to weigh the importance of different parts of the input sequence, allowing them to capture complex patterns and relationships. One of the best-known examples of a transformer-based model is OpenAI's GPT series, which has achieved state-of-the-art performance in various NLP tasks.
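The self-attention weighting can be sketched as scaled dot-product attention over a handful of token vectors. As a simplifying assumption, the queries, keys, and values are all the raw token vectors themselves. Real transformers first apply learned projection matrices and use several attention heads, which this sketch omits. The function names and token values are illustrative.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with queries = keys = values = tokens."""
    d = len(tokens[0])
    outputs, all_weights = [], []
    for query in tokens:
        # Similarity of this token to every token in the sequence, scaled by sqrt(d).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in tokens]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        mixed = [sum(w * value[j] for w, value in zip(weights, tokens)) for j in range(d)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
```

Each row of `weights` sums to 1, and the first token attends more strongly to itself and to the identical third token than to the second. Because every row is computed independently of the others, the whole sequence can be processed in parallel, unlike the step-by-step loop of an RNN.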

Neural networks have demonstrated remarkable success in a wide range of applications and continue to be a driving force in the field of artificial intelligence. As research progresses, it is likely that new architectures and techniques will be developed to further improve their capabilities and performance.